- INSTALL APACHE SPARK ON VMWARE INSTALL

- INSTALL APACHE SPARK ON VMWARE UPDATE

- INSTALL APACHE SPARK ON VMWARE SOFTWARE

- INSTALL APACHE SPARK ON VMWARE DOWNLOAD

Now we will look at the first use case, which uses GPUs with Apache Spark 3 and NVIDIA RAPIDS for transaction processing. RAPIDS offers a powerful GPU DataFrame based on Apache Arrow data structures. Arrow specifies a standardized, language-independent, columnar memory format, optimized for data locality, to accelerate analytical processing. With the GPU DataFrame, batches of column values from multiple records take advantage of modern GPU designs and accelerate reading, queries, and writing. In this use case, NVIDIA RAPIDS is run with Apache Spark 3 and validated with a subset of TPC-DS queries.

INSTALL APACHE SPARK ON VMWARE INSTALL

Today, we saw how our Support Engineers install Apache Spark on a single Ubuntu system. In part 1 of the series, we introduced the solution and the components used.

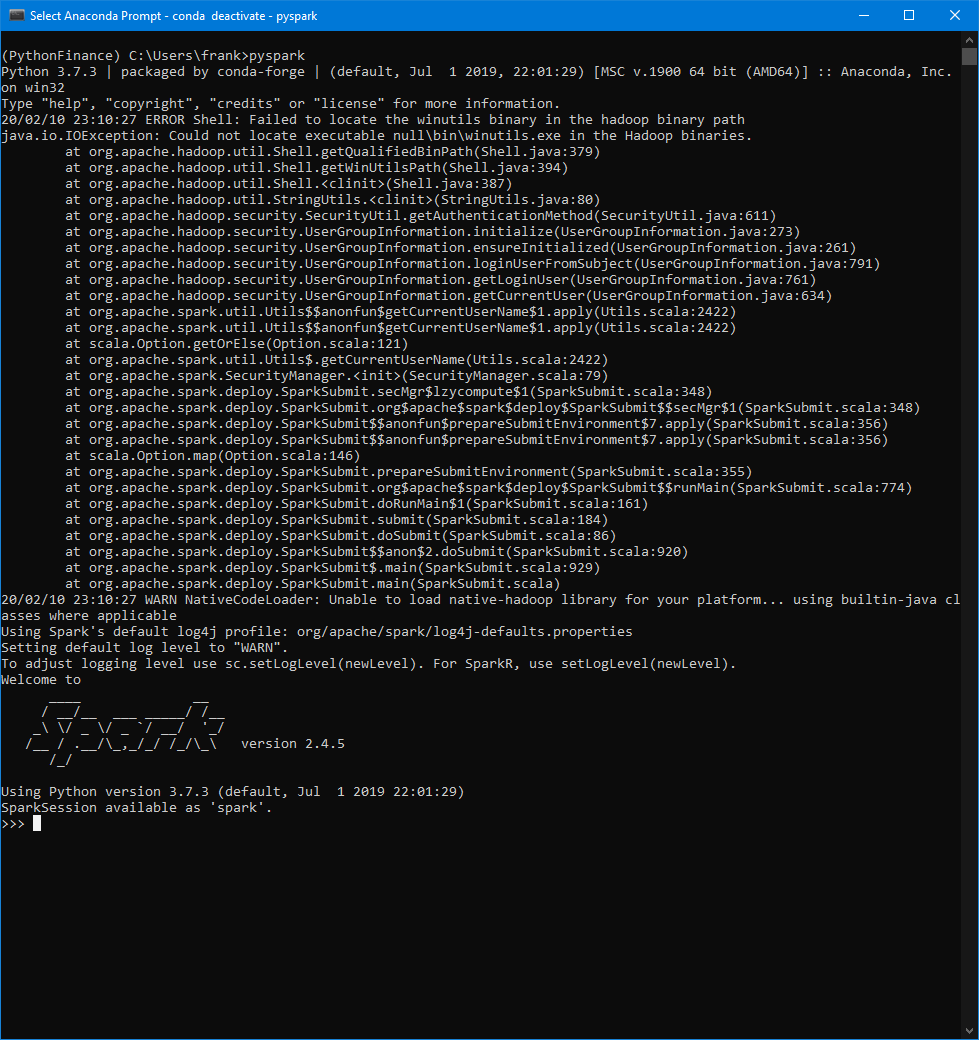

Back in the terminal, start the worker, passing in the Spark URL copied from the web interface. Once the worker is running, it should be visible in the web interface.

The web interface is handy, but it is also necessary to ensure that Spark's command-line environment works as expected. In the terminal, run the following command to open the Spark Shell:

`:~# spark-shell`

The Spark Shell is available not only in Scala but also in Python. Exit the current Spark Shell by pressing CTRL + D. To test out PySpark, run the following command:

`:~# pyspark`

If it becomes necessary for any reason to turn off the worker and master Spark processes, run the following commands:

`:~# stop-slave.sh`

`:~# stop-master.sh`

In short, Apache Spark is a distributed, open-source, general-purpose framework used in cluster computing environments for analyzing big data.
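The worker start and shutdown steps above can be wrapped in a few helpers. This is a minimal bash sketch, not part of Spark itself: `spark_master_url`, `run`, `start_local_worker`, `stop_local_cluster` and the `SPARK_DRY_RUN` switch are hypothetical names. It assumes the Spark 3.0.x script names used in this post (`start-slave.sh`, `stop-slave.sh`, `stop-master.sh`; later Spark releases also ship `start-worker.sh`) and the standalone master's default port 7077. The URL shown in the web interface is the one to trust if it differs.

```shell
#!/usr/bin/env bash
# Minimal sketch of the single-node worker steps.
# Assumptions: Spark 3.0.x sbin scripts are on PATH, the master listens on
# the default standalone port 7077, and SPARK_DRY_RUN=1 (a hypothetical
# switch) prints commands instead of running them.

# Build a master URL in the spark://HOST:PORT form shown on the web interface.
spark_master_url() {
  local host="${1:-$(hostname)}"   # default: this machine's hostname
  local port="${2:-7077}"          # default standalone master port
  printf 'spark://%s:%s\n' "$host" "$port"
}

# Run a command, or only echo it when SPARK_DRY_RUN=1.
run() {
  if [ "${SPARK_DRY_RUN:-0}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Start a worker attached to the given master URL (default: this host).
start_local_worker() {
  run start-slave.sh "${1:-$(spark_master_url)}"
}

# Stop the worker first, then the master.
stop_local_cluster() {
  run stop-slave.sh
  run stop-master.sh
}
```

For example, `start_local_worker spark://myhost:7077` starts a worker against that master, and `SPARK_DRY_RUN=1 stop_local_cluster` only prints the two stop commands.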

`:~# wget <mirror URL from the Apache Spark download page>`

After completing the download, extract the Apache Spark tar file and move the extracted directory to /opt:

`:~# tar -xvzf spark-*`

`:~# mv spark-3.0.1-bin-hadoop2.7/ /opt/spark`

Configure the Environment

Before starting the Spark master server, we need to configure a few environment variables. First, set them in the .profile file by running the following commands. Use `>>` so that each command appends to the file; a single `>` would overwrite it, leaving only the last line. Single quotes on the PATH line keep `$PATH` from being expanded until login.

`:~# echo "export SPARK_HOME=/opt/spark" >> ~/.profile`

`:~# echo 'export PATH=$PATH:/opt/spark/bin:/opt/spark/sbin' >> ~/.profile`

`:~# echo "export PYSPARK_PYTHON=/usr/bin/python3" >> ~/.profile`

To make these new environment variables available in the current shell and to Apache Spark, also run:

`:~# source ~/.profile`

Now that the environment is configured, the next step is to start the Spark master server:

`:~# start-master.sh`

To view the web interface, use SSH tunneling to forward a port from the local machine to the server. Log out of the server, then run the following command on the local machine, appending the server's hostname or IP address:

`ssh -L 8080:localhost:8080`

It should now be possible to view the web interface from a browser on the local machine by visiting port 8080 on localhost. Once the web interface loads, copy the Spark URL, as it will be needed in the next step. In this case, Apache Spark is installed on a single machine, so the worker process will also be started on this server.
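The environment variables for /opt/spark can also be written so that rerunning the setup does not pile up duplicate lines in the profile. This is a minimal bash sketch; `append_once` and `configure_spark_env` are hypothetical helpers, and the values (`/opt/spark`, `/usr/bin/python3`) are the ones used in this post.

```shell
#!/usr/bin/env bash
# Minimal sketch: add the Spark environment variables to a profile file
# without duplicating them on repeated runs.
# Assumptions: Spark lives in /opt/spark, PySpark should use /usr/bin/python3,
# and the target file defaults to ~/.profile.

# Append a line to a file only if that exact line is not already present.
append_once() {
  local line="$1" file="$2"
  touch "$file"
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

configure_spark_env() {
  local profile="${1:-$HOME/.profile}"
  append_once 'export SPARK_HOME=/opt/spark' "$profile"
  # Single quotes keep $PATH literal so it expands at login time.
  append_once 'export PATH=$PATH:/opt/spark/bin:/opt/spark/sbin' "$profile"
  append_once 'export PYSPARK_PYTHON=/usr/bin/python3' "$profile"
}
```

After `configure_spark_env` (or `configure_spark_env /path/to/other/profile`), load the variables into the current shell with `source ~/.profile`. Matching whole lines with `grep -qxF` makes the check literal, so characters such as `$` in the PATH line are not treated as regex syntax.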

INSTALL APACHE SPARK ON VMWARE DOWNLOAD

Let us now discuss the installation steps in detail. The first step is to install the dependencies: the JDK, Scala, and Git packages. This can be done with the following command:

`:~# apt install default-jdk scala git -y`

We can now verify the installed dependencies by running these commands:

`:~# java -version`

`:~# javac -version`

`:~# scala -version`

`:~# git --version`

If the installation completed successfully, each command prints the version of its package. Now that the dependencies are installed, the next step is to download Apache Spark to the server. Mirrors with the latest Apache Spark version can be found on the Apache Spark download page, and the download itself is done with `wget` using one of those mirror links.
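The dependency check above can be done in one pass before moving on. This minimal bash sketch uses a hypothetical helper, `check_deps`, which relies on the shell builtin `command -v`. It only confirms that each tool is on PATH, so the version commands remain the real check that the packages work.

```shell
#!/usr/bin/env bash
# Minimal sketch: report which of the given tools are missing from PATH.
# The exit status is the number of missing tools (0 means all were found).

check_deps() {
  local missing=0 tool
  for tool in "$@"; do
    if command -v "$tool" >/dev/null 2>&1; then
      echo "ok:      $tool"
    else
      echo "missing: $tool"
      missing=$((missing + 1))
    fi
  done
  return "$missing"
}
```

For the packages in this post, `check_deps java javac scala git` lists each tool and returns a non-zero status if any of them is absent.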

INSTALL APACHE SPARK ON VMWARE UPDATE

Apache Spark, a distributed, open-source, general-purpose framework, helps in analyzing big data in cluster computing environments. It can easily process and distribute work on large datasets across multiple computers. Today, let us discuss the steps to get the basic setup going on a single system. The first step in installing Apache Spark on Ubuntu is to install its dependencies. Before installing them, it is a good idea to make sure the system's package lists are up to date with the `apt update` command.
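The update step and the dependency installation can be chained so that the install only runs if the update succeeded. This is a minimal bash sketch assuming an apt-based Ubuntu system and a root shell (as in the `:~#` prompts); `run`, `prepare_system` and the `DRY_RUN=1` switch are hypothetical names, and the package list is the one used in this post.

```shell
#!/usr/bin/env bash
# Minimal sketch: refresh the package index, then install the Spark
# dependencies (default-jdk, scala, git).
# Assumptions: apt-based Ubuntu, run as root; DRY_RUN=1 only prints commands.

# Run a command, or only echo it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

prepare_system() {
  run apt update || return   # stop here if the index refresh fails
  run apt install default-jdk scala git -y
}
```

`DRY_RUN=1 prepare_system` previews the two commands, and `prepare_system` runs them. For unattended scripts, `apt-get` is often preferred because `apt` warns that its command-line interface is not guaranteed to stay stable.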

INSTALL APACHE SPARK ON VMWARE SOFTWARE

Installing Apache Spark on Ubuntu requires some dependency packages, such as JDK, Scala, and Git, to be installed on the system. As a part of our Server Management Services, we help our customers with software installations regularly.